Detecting a person's heart rate with a webcam connected to a Windows, Linux or Mac OS X computer sounds impossible, but it is in fact very much possible, and free at that. Thanks to the free, open-source, cross-platform Python program "webcam-pulse-detector", available on GitHub, users can get this working within minutes.

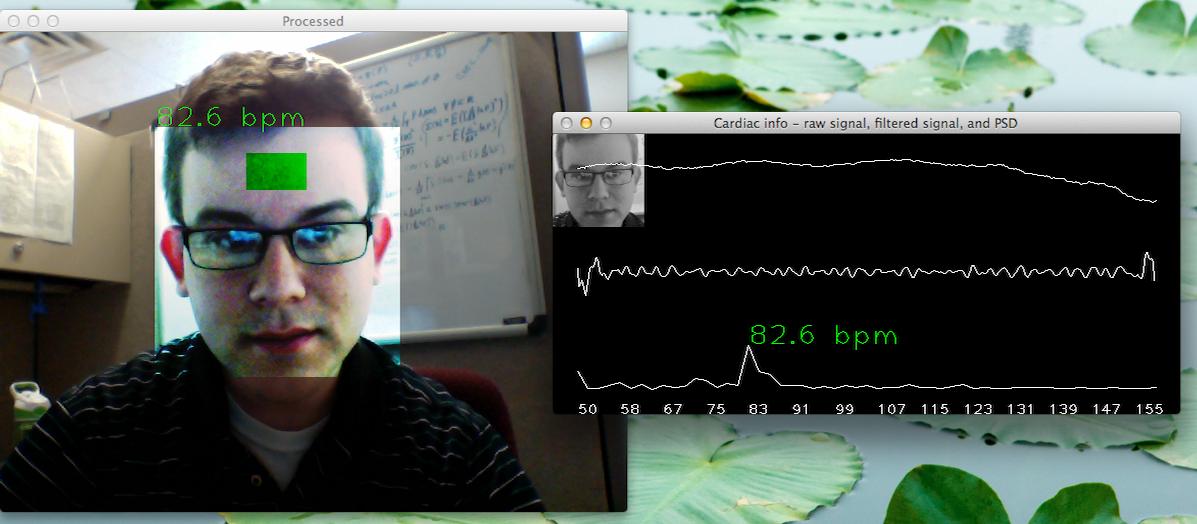

How it works: This application uses OpenCV to find the location of the user's face, then isolates the forehead region. Data is collected from this location over time to estimate the user's heart rate. This is done by measuring the average optical intensity in the forehead location, using only the green channel of the subimage (a better color mixing ratio may exist, but the blue channel tends to be very noisy). Physiological data can be estimated this way thanks to the optical absorption characteristics of (oxy-)hemoglobin (see http://www.opticsinfobase.org/oe/abstract.cfm?uri=oe-16-26-21434).
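As a rough illustration of the measurement described above, here is a minimal sketch that picks a forehead rectangle inside a detected face box and averages its green channel. It is not taken from webcam-pulse-detector: the function names `forehead_region` and `mean_green` and the fractional offsets are assumptions made up for this example, and the face box is assumed to come from some face detector (for instance an OpenCV cascade) as `(x, y, w, h)`.

```python
def forehead_region(face, rel_x=0.5, rel_y=0.18, rel_w=0.25, rel_h=0.15):
    """Return an (x, y, w, h) rectangle near the top-center of a face box.

    The fractions are illustrative guesses, not values from the project.
    """
    x, y, w, h = face
    fw = int(w * rel_w)
    fh = int(h * rel_h)
    fx = int(x + w * rel_x - fw / 2)
    fy = int(y + h * rel_y - fh / 2)
    return fx, fy, fw, fh


def mean_green(frame, region):
    """Average the green channel over a rectangle of the frame.

    `frame` is indexed as frame[row][col][channel]. Index 1 is green in
    both BGR (the OpenCV default) and RGB channel orders.
    """
    x, y, w, h = region
    if w <= 0 or h <= 0:
        raise ValueError("region must have a positive width and height")
    total = 0
    for row in frame[y:y + h]:
        for pixel in row[x:x + w]:
            total += pixel[1]
    return total / (w * h)
```

Calling `mean_green` once per captured frame yields one sample of the raw signal. The plain loops also work on a NumPy image array, although with NumPy a slice followed by `.mean()` would be the faster choice.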

With good lighting and minimal noise due to motion, a stable heartbeat should be isolated in about 15 seconds. Other physiological waveforms (such as Mayer waves) should also be visible in the raw data stream.
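To show how a heart rate could be pulled out of such a series of samples, the sketch below scans candidate rates and keeps the one with the most spectral power. This is an assumed approach for illustration, not necessarily what the project does internally; the name `estimate_bpm` and the 50 to 180 beats-per-minute search band are hypothetical choices.

```python
import cmath
import math


def estimate_bpm(samples, fps, lo_bpm=50, hi_bpm=180, step_bpm=1):
    """Return the candidate heart rate (in bpm) with the strongest spectral power.

    `samples` holds one mean-intensity value per frame and `fps` is the
    capture rate. The search band and step are illustrative assumptions.
    """
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the constant offset

    best_bpm, best_power = None, -1.0
    bpm = lo_bpm
    while bpm <= hi_bpm:
        freq = bpm / 60.0  # beats per minute -> Hz
        # Correlate the signal with a complex sinusoid at this frequency.
        acc = sum(
            v * cmath.exp(-2j * math.pi * freq * i / fps)
            for i, v in enumerate(centered)
        )
        power = abs(acc) ** 2
        if power > best_power:
            best_bpm, best_power = bpm, power
        bpm += step_bpm
    return best_bpm
```

A 15-second window resolves frequencies only about 1/15 Hz (roughly 4 beats per minute) apart, which is one reason a longer stretch of steady data gives a more stable reading.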

Once the user's heart rate has been estimated, the real-time phase variation associated with this frequency is also computed. This allows the heartbeat to be exaggerated in the post-process frame rendering, causing the highlighted forehead location to pulse in sync with the user's own heartbeat.
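One way to picture the phase step is to measure the phase of the signal component at the estimated rate, referenced to the newest sample, and turn it into a brightness factor. The sketch below is an assumption-laden illustration rather than the project's rendering code; `pulse_strength` and the cosine mapping to the range 0 to 1 are hypothetical.

```python
import cmath
import math


def pulse_strength(samples, fps, bpm):
    """Return (phase, strength) of the heartbeat component at the newest sample.

    `phase` is in radians and is 0 when the component peaks at the last
    sample. `strength` maps it to [0, 1] so a renderer could scale the
    forehead highlight with it. The mapping is an illustrative choice.
    """
    n = len(samples)
    mean = sum(samples) / n
    freq = bpm / 60.0  # beats per minute -> Hz
    # Correlate with a complex sinusoid whose time origin is the last sample.
    acc = sum(
        (v - mean) * cmath.exp(-2j * math.pi * freq * (i - (n - 1)) / fps)
        for i, v in enumerate(samples)
    )
    phase = cmath.phase(acc)
    strength = 0.5 * (1.0 + math.cos(phase))
    return phase, strength
```

Recomputing this on every new frame makes `strength` rise and fall once per heartbeat, which could then drive how strongly the highlighted region is drawn.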

Detecting several individuals simultaneously in a single camera's image stream is definitely possible, but at the moment information is extracted from only one face for analysis.

